Applied Anime Video Super-Resolution

Abstract

Vintage anime, mastered for analog broadcast in the 1970s and 1980s, retains very little usable detail when upscaled bicubically to a modern 1080p or 4K display. CNN-based super-resolution networks trained on cartoon line art recover edges, restore texture in flat regions, and remove the colour banding that survives bicubic upscaling. This page documents an applied super-resolution pipeline run frame-by-frame on Candy Candy (1976) and Dragon Ball Z (1989), using pretrained anime-specific networks.

Method

The CNN super-resolution family (SRCNN from Dong et al. 2014, VDSR, EDSR, ESRGAN) shares the same skeleton: a low-resolution input is upsampled (sub-pixel convolution or transposed convolution), then a stack of residual convolution blocks refine the high-resolution prediction. The image is supervised with a combination of pixel-wise L1/L2 loss and a feature-space (perceptual) loss computed against a pretrained VGG network so that the output is sharp rather than just close-in-MSE.

The GAN variant (ESRGAN, Real-ESRGAN) adds a discriminator that distinguishes real high-resolution images from generated ones; the generator is trained to fool it. This is what produces the characteristic crispness in textureless regions that pure-MSE networks blur.

The training distribution does most of the work; running a face-photo-trained SR network on anime produces results that are sharp where anime is not, and soft where anime should be.

Stills: Original vs Upscaled

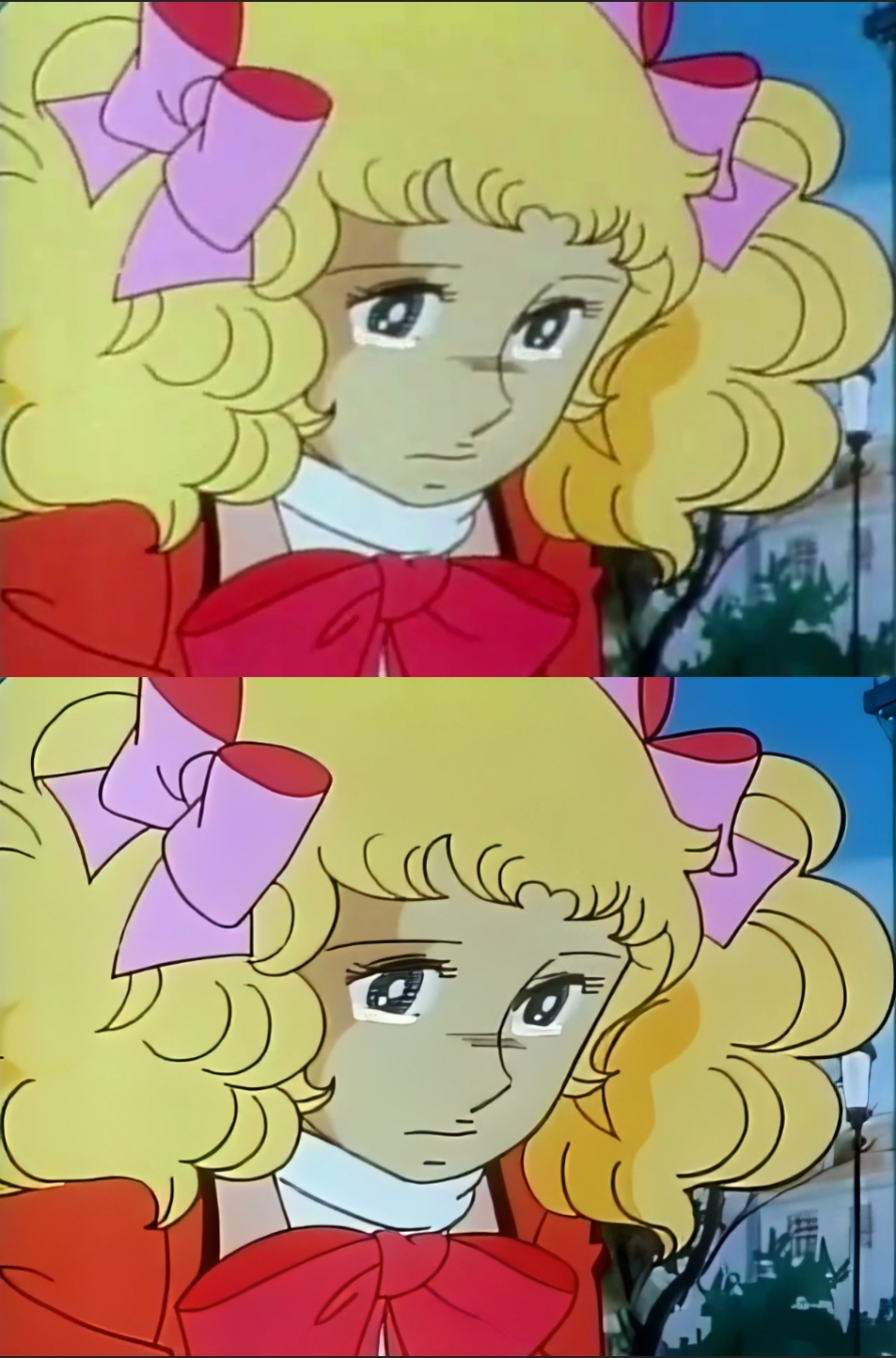

Each panel shows a low-resolution source frame and its CNN-upscaled version. Look for cel-line clarity, recovery of texture in skin tones, and the disappearance of the chroma-subsampling stipple in flat colour regions.

Candy Candy (1976): frame 1

Candy Candy (1976): frame 2

Dragon Ball Z (1989): Krillin

Dragon Ball Z (1989): Goku

Dragon Ball Z (1989): Gohan

Video Comparisons

Original (top half of each clip) versus upscaled (bottom half), encoded side-by-side for direct comparison.