Dynamic Word Cloud Generator

Abstract

A small text-corpus visualiser built as a teaching exercise on top of nltk, scikit-learn, and the wordcloud library. Tokens are weighted by TF-IDF rather than raw frequency so that domain-specific terms surface above generic high-frequency words. The renderer respects an arbitrary alpha mask (a Pokémon Arceus silhouette, a fall-leaf shape, a Mexico outline), and an animated variant updates the layout frame-by-frame so the cloud "grows" as the corpus is fed in. This is an exploration / demonstration project, not a research result.

Pipeline

- Tokenise & clean. Lowercase, strip punctuation, drop English stop-words from `nltk.corpus.stopwords`, lemmatise with `WordNetLemmatizer`.
- TF-IDF weighting. Run `TfidfVectorizer` on the cleaned token stream against a background corpus (English Wikipedia sample). The score for term $t$ in document $d$ is:

  $$\text{tf-idf}(t, d) = \text{tf}(t, d) \cdot \log\frac{N}{\text{df}(t)}$$

  where $\text{tf}$ is term frequency in $d$, $N$ is total documents in the background corpus, and $\text{df}$ is document frequency of $t$. This dampens generic English words and amplifies domain-specific ones.
- Mask-aware layout. Pass the binary alpha channel of an input PNG (Arceus silhouette, leaf shape, Mexico outline) into `WordCloud(mask=...)`. The layout engine packs words inside the mask; pixels with alpha = 0 are forbidden.
- Animation. The dynamic variant feeds the corpus in chunks and re-renders the cloud each chunk. Frames are stitched into a GIF with `imageio.mimsave`.
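To make the weighting step concrete, here is a minimal standard-library sketch of the formula as written above. It is not the project's code: the function name `tf_idf` and the toy documents are invented for illustration, and raw counts are assumed for term frequency.

```python
import math
from collections import Counter

def tf_idf(doc_tokens, background_docs):
    """Score each term in doc_tokens as tf(t, d) * log(N / df(t)).

    background_docs is a list of token lists. Terms that never occur in the
    background corpus are skipped, because df(t) = 0 leaves the score undefined.
    """
    n_docs = len(background_docs)
    # df(t): number of background documents that contain t at least once.
    df = Counter()
    for doc in background_docs:
        df.update(set(doc))
    tf = Counter(doc_tokens)  # assumption: raw counts serve as term frequency
    return {
        term: count * math.log(n_docs / df[term])
        for term, count in tf.items()
        if df[term] > 0
    }

# Toy data, invented for illustration only.
background = [
    ["the", "cat", "sat"],
    ["the", "dog", "ran"],
    ["the", "tensor", "shape"],
    ["the", "cat", "ran"],
]
document = ["the", "tensor", "the", "tensor", "cat"]
scores = tf_idf(document, background)
```

Here "the" appears in every background document, so its weight is zero, while "tensor" appears in only one and ends up with the highest score. Note that `TfidfVectorizer` with default settings smooths the inverse document frequency and L2-normalises each row, so its numbers will not match this textbook form exactly. A dictionary like `scores` is the kind of input that `WordCloud.generate_from_frequencies` accepts.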

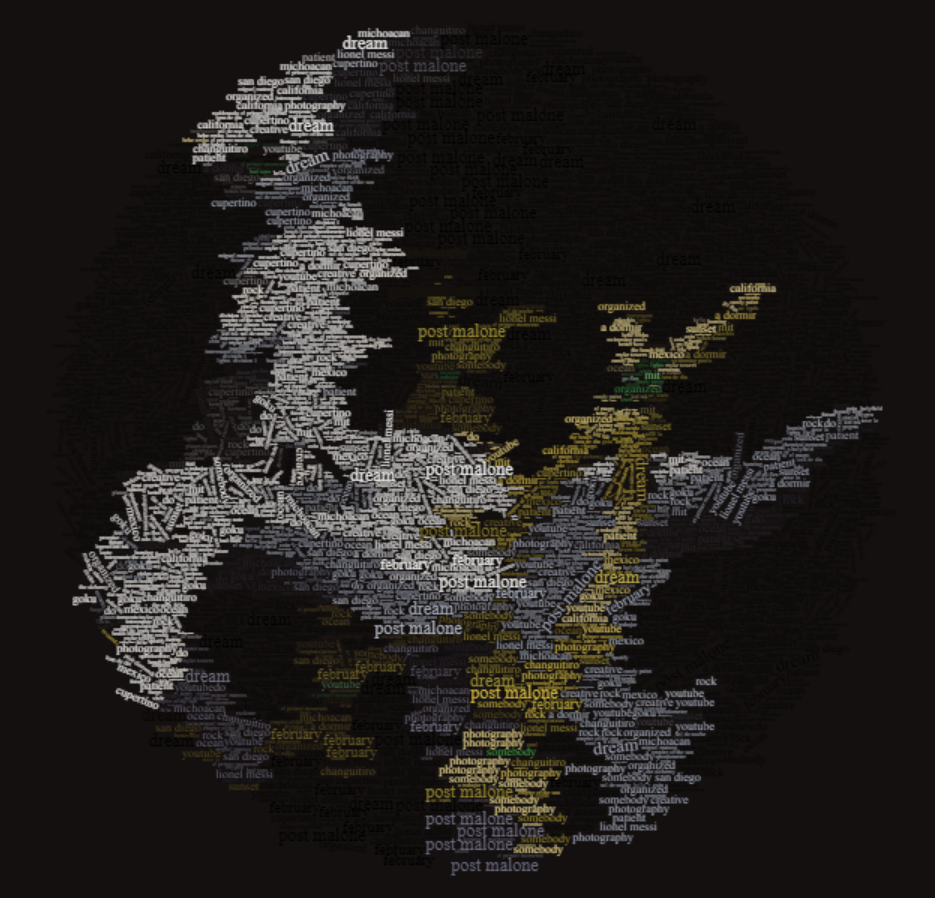

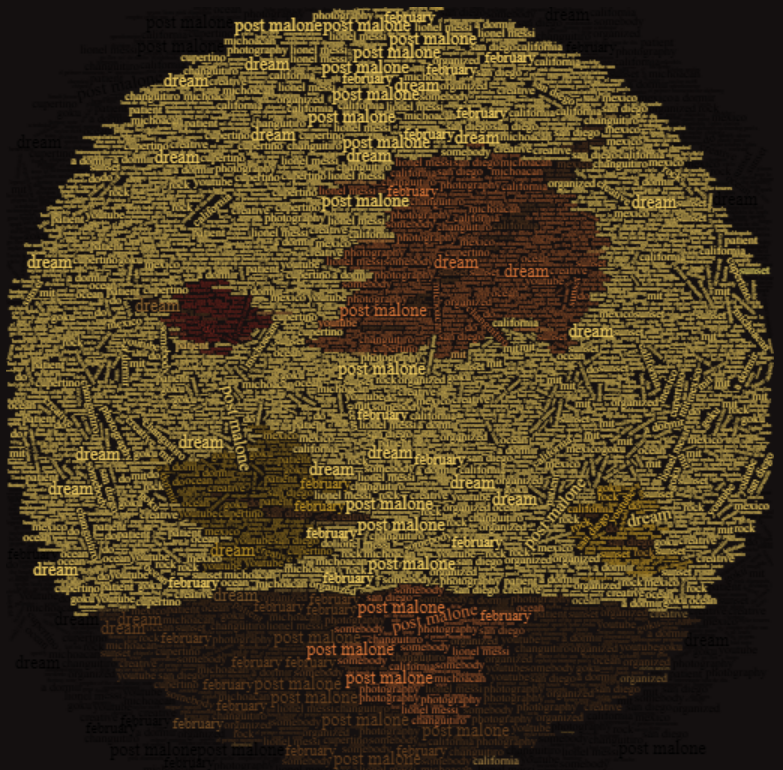

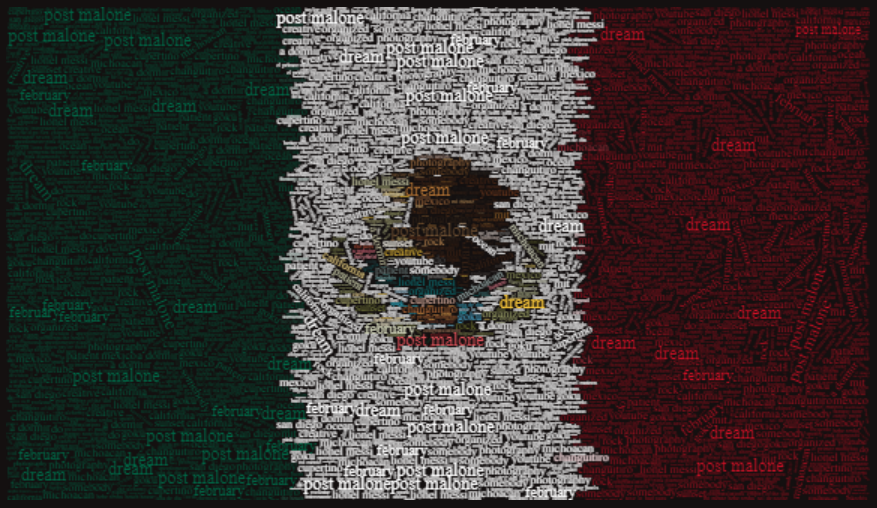
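The animation step in the pipeline can be sketched as a generator of growing token prefixes, one per frame. This assumes the chunks are cumulative (each frame sees everything fed in so far), which is how the cloud would appear to "grow"; the helper name `growing_prefixes` and the chunk size are hypothetical, and the scoring and rendering of each prefix are left out.

```python
def growing_prefixes(tokens, chunk_size):
    """Yield the tokens seen so far after each chunk has been fed in."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    # Step the end index forward one chunk at a time; slicing clamps the
    # final prefix to the full list when the last chunk is short.
    for end in range(chunk_size, len(tokens) + chunk_size, chunk_size):
        yield tokens[:end]

# Toy token stream, invented for illustration only.
tokens = "a b c d e f g".split()
frames = list(growing_prefixes(tokens, 3))
```

Each yielded prefix would be weighted, rendered to an image, and appended to the list of frames that is finally handed to `imageio.mimsave`.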

Mask Examples

Each mask is a binary alpha image. The static cloud (PNG) shows the final layout; the animated cloud (GIF) shows the per-chunk evolution as the corpus fills in.
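One detail worth spelling out: `WordCloud` does not read the alpha channel itself. Its `mask` argument is an array in which pure white (255) marks pixels to avoid, so the alpha channel has to be converted first. The sketch below shows one way to do that with NumPy; the function name `alpha_to_wordcloud_mask` and the tiny synthetic image are illustrative assumptions, not the project's code.

```python
import numpy as np

def alpha_to_wordcloud_mask(rgba):
    """Convert an (H, W, 4) uint8 RGBA array into a 2-D mask for WordCloud.

    WordCloud treats pure white (255) as masked out, so fully transparent
    pixels become 255 and every other pixel becomes 0 (free to draw on).
    """
    alpha = rgba[..., 3]
    return np.where(alpha == 0, 255, 0).astype(np.uint8)

# Tiny synthetic 2x3 image: left column transparent, the rest opaque.
rgba = np.zeros((2, 3, 4), dtype=np.uint8)
rgba[:, 1:, 3] = 255
mask = alpha_to_wordcloud_mask(rgba)
```

For a real silhouette, the RGBA array would typically come from Pillow, for example `np.array(Image.open(path).convert("RGBA"))` with `path` pointing at the PNG, and the result would be passed as `WordCloud(mask=mask)`.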

Arceus mask · static

Arceus mask · animated

Fall leaf mask · static

Fall leaf mask · animated

Mexico outline mask · static

Mexico outline mask · animated

Built collaboratively with Connor Carpenter, Ryan Lay, Samyak Karnavat, and Yash Shah.